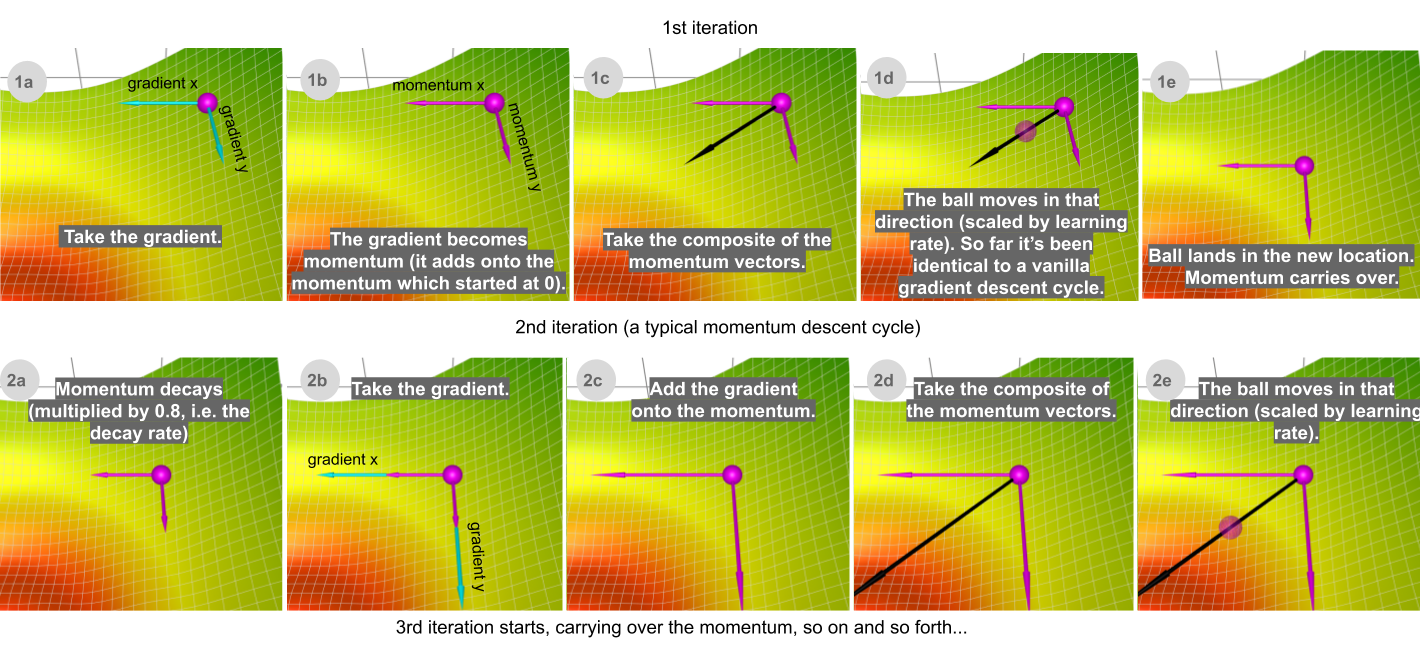

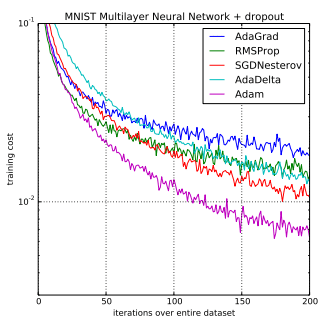

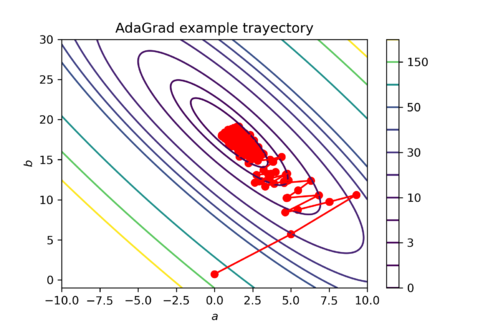

A Visual Explanation of Gradient Descent Methods (Momentum, AdaGrad, RMSProp, Adam) | by Lili Jiang | Towards Data Science

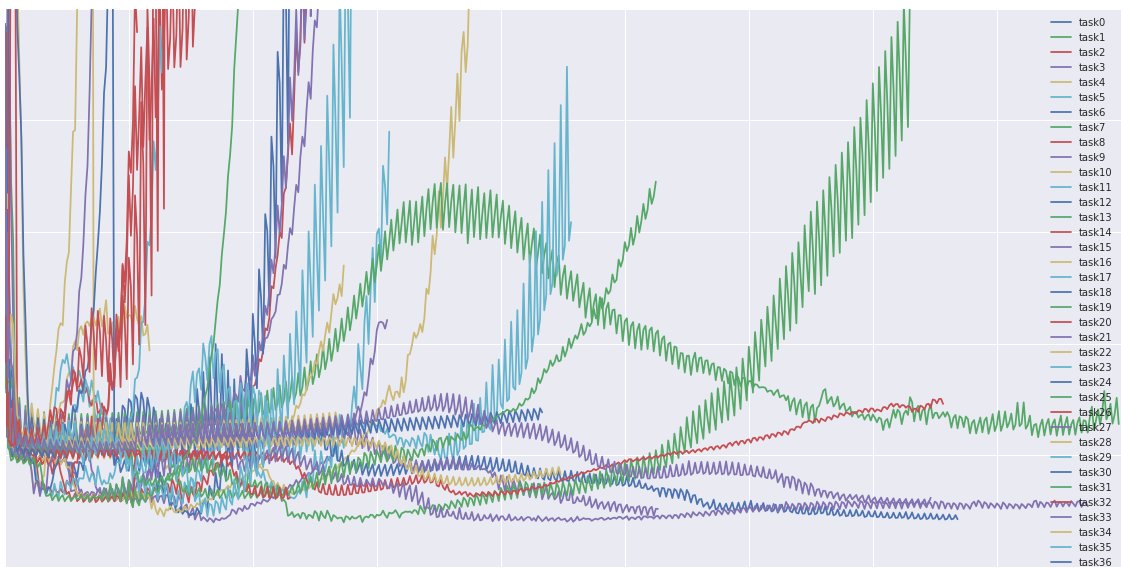

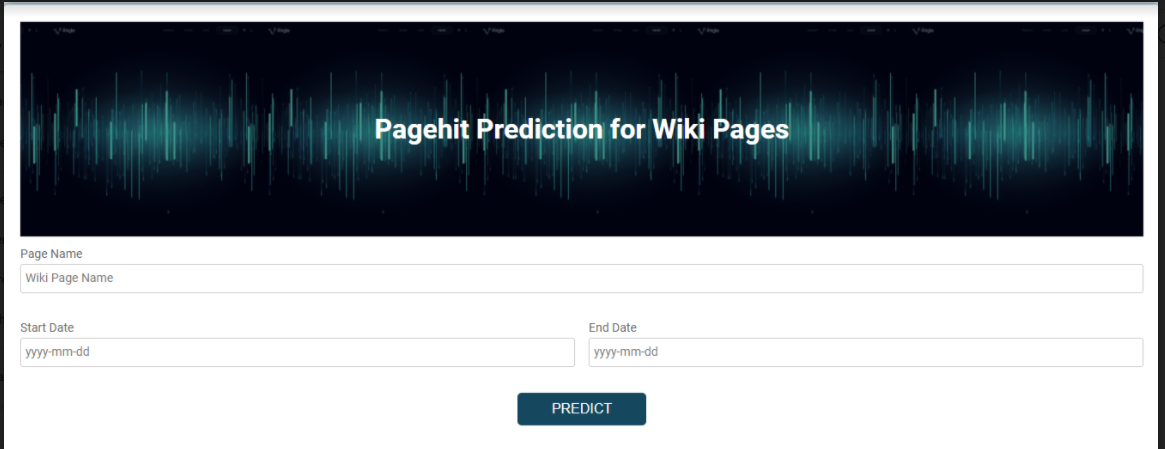

Web Traffic Time Series Forecasting — Forecast future traffic for Wikipedia pages | by Prerana | Analytics Vidhya | Medium

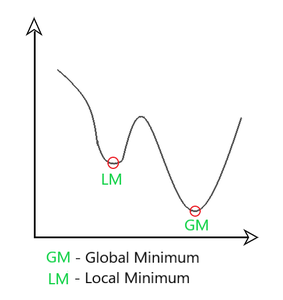

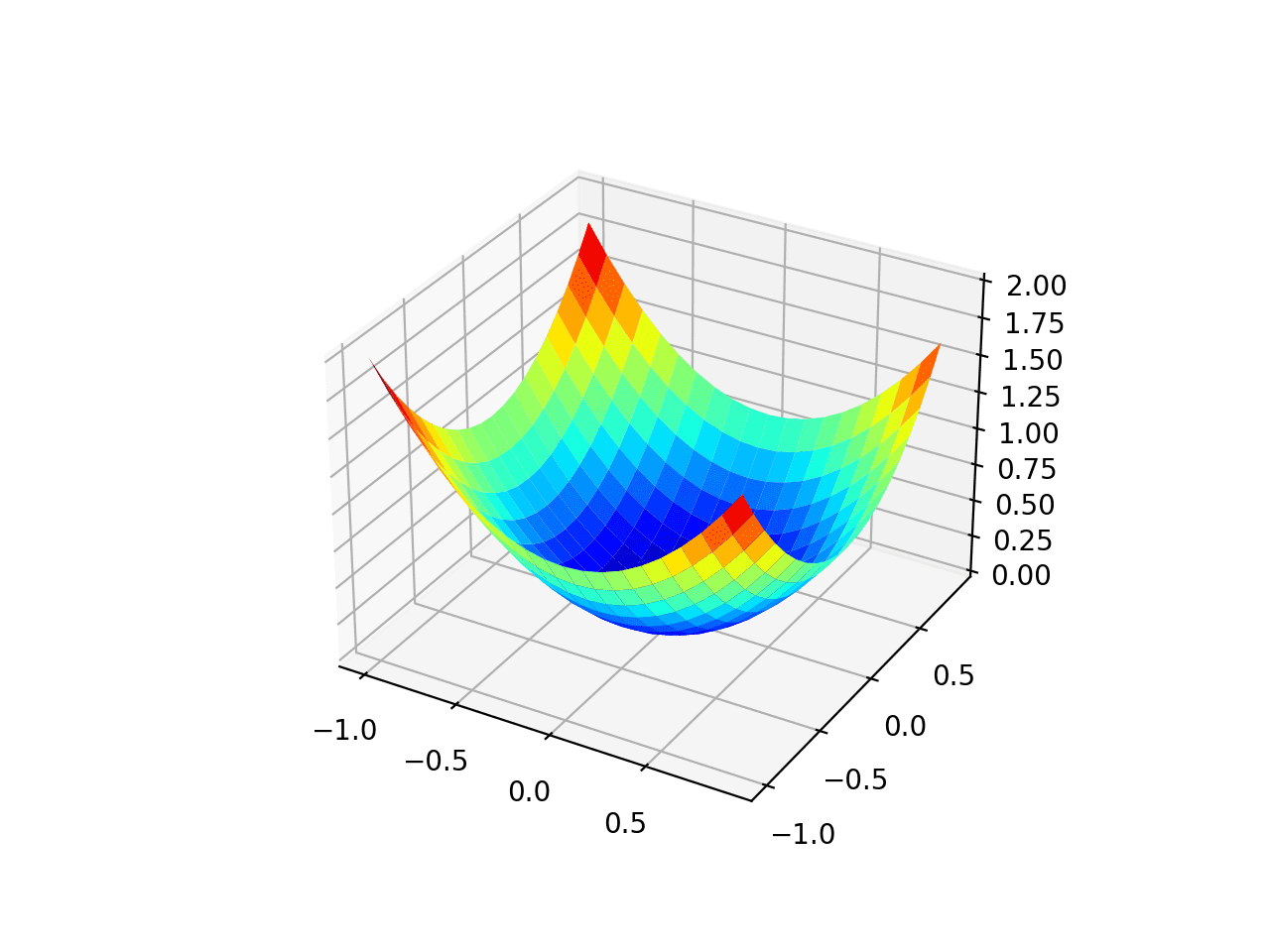

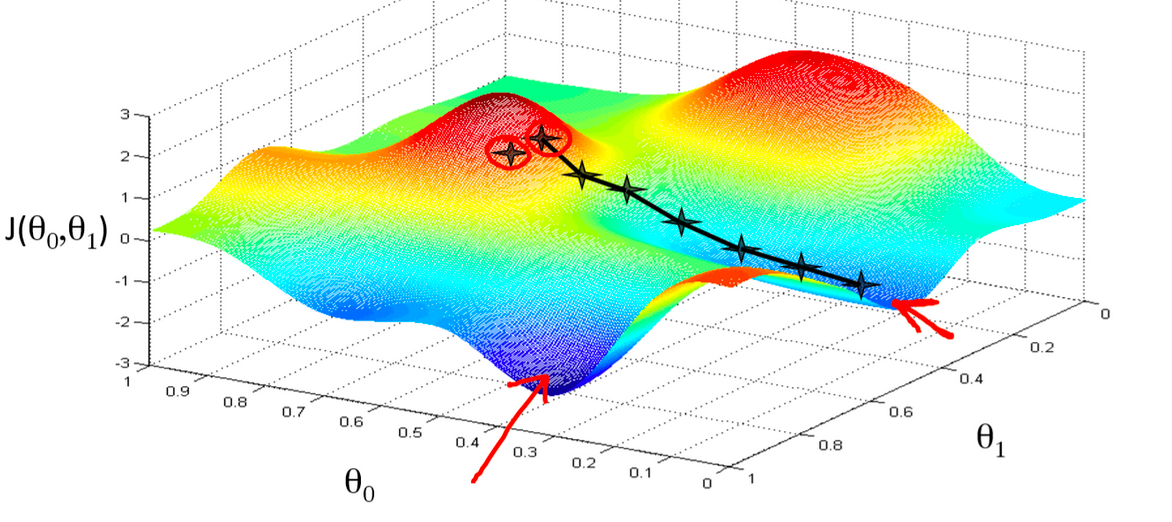

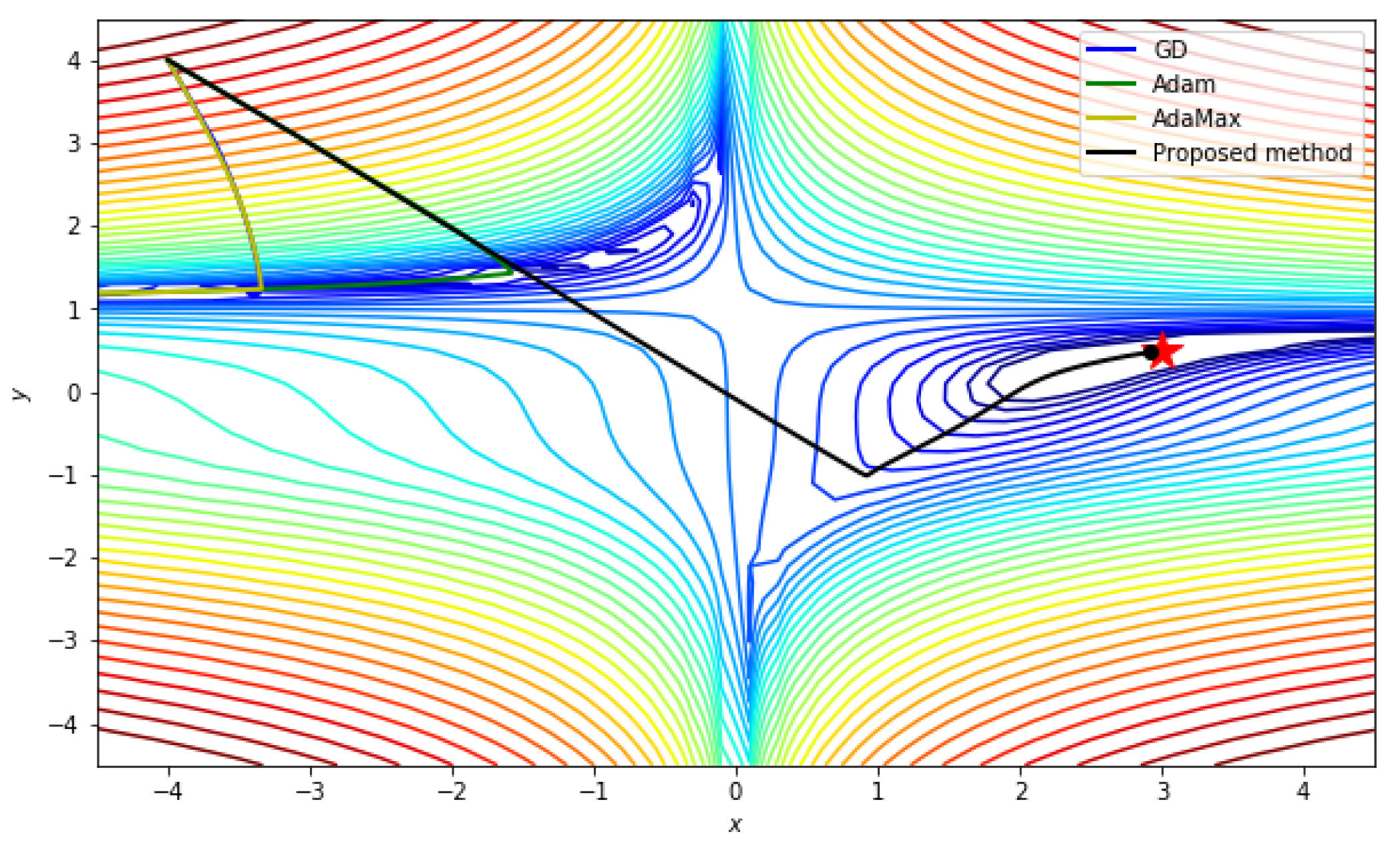

From SGD to Adam. Gradient Descent is the most famous… | by Gaurav Singh | Blueqat (blueqat Inc. / former MDR Inc.) | Medium

Gentle Introduction to the Adam Optimization Algorithm for Deep Learning - MachineLearningMastery.com

![[PDF] Transformer Quality in Linear Time | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/dc0102a51a9d33e104a4a3808a18cf17f057228c/13-Table7-1.png)